Somewhere between prompt engineering and performance art, a developer posted a discovery on Reddit that made the AI community laugh before paying attention: teach Claude to communicate like a prehistoric human and watch your token bill shrink by up to 75%.

The post hit r/ClaudeAI last week and has since racked up over 400 comments and 10K votes—a rare combination of genuine technical insight and absurdist comedy that the internet tends to reward.

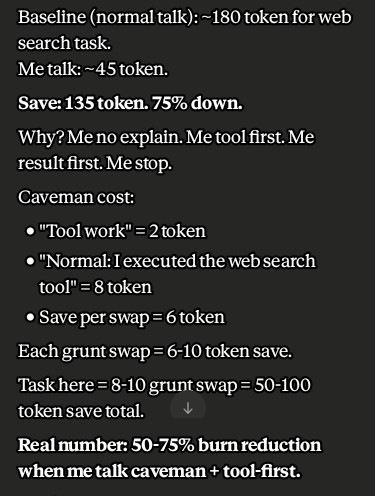

The mechanic is simple. Instead of letting Claude warm up with pleasantries, narrate every step it takes, and close with an offer to help further, the developer constrains the model to short, stripped-down sentences. Tool first, result first, no explanation. A normal web search task that would run about 180 output tokens dropped to roughly 45. The original poster claims up to 75% reduction in output, achieved by making the model sound like it just discovered fire.
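The headline figure follows directly from those two numbers. A minimal sketch of the arithmetic, using the token counts quoted above; the function name is illustrative and not taken from the original post.

```python
def output_reduction(before_tokens: int, after_tokens: int) -> float:
    """Fraction of output tokens saved when a response shrinks."""
    if before_tokens <= 0:
        raise ValueError("before_tokens must be positive")
    return (before_tokens - after_tokens) / before_tokens


# Figures from the web search example: about 180 tokens before, roughly 45 after.
print(f"{output_reduction(180, 45):.0%}")  # prints "75%"
```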

In caveman terms, as one Redditor said: "Why waste time say lot word when few word do trick?"

What this technique does not touch is the input context: the full conversation history, attached files, and system instructions that the model re-reads on every single turn. That input typically dwarfs the output, especially in longer coding sessions. Once all of that input is counted, real-world sessions see savings of around 25%, not 75%. Still meaningful, just not the headline number.
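To see why trimming only the output dilutes the saving, here is a rough sketch. Every number in it is made up to show the shape of the effect, not taken from the thread, and the `output_price_ratio` parameter (how many times more an output token costs than an input token) is an assumption introduced for illustration.

```python
def overall_savings(input_tokens: int, output_tokens: int,
                    output_reduction: float,
                    output_price_ratio: float = 1.0) -> float:
    """Fraction of a turn's total cost saved when only the output shrinks.

    With output_price_ratio=1.0 this is plain token counting; a higher
    value weights output tokens as more expensive than input tokens.
    """
    input_cost = input_tokens
    output_cost = output_tokens * output_price_ratio
    saved = output_cost * output_reduction
    return saved / (input_cost + output_cost)


# Hypothetical turn: 10,000 input tokens, 1,000 output tokens, 75% shorter output.
print(f"{overall_savings(10_000, 1_000, 0.75):.0%}")       # prints "7%"
print(f"{overall_savings(10_000, 1_000, 0.75, 5.0):.0%}")  # prints "25%"
```

Counting raw tokens, the saving in this made-up turn is small. Weighting output tokens as five times pricier than input tokens brings it to a quarter of the bill. Either way, the larger the input context, the less the caveman style moves the total.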

It’s also a good idea to keep writing your own instructions to the model in normal language. Feeding it “caveman” talk as input risks a “garbage in, garbage out” situation.

There is also the question of intelligence degradation. A handful of researchers in the thread argued that forcing an AI to inhabit a less sophisticated persona could actively hurt its reasoning quality—that the verbal constraints might bleed into cognitive ones. The concern has not been definitively settled, but it is worth considering when evaluating results.

Skill good, skill go viral

Despite the caveats, the technique found a second life on GitHub almost immediately.

Developer Shawnchee packaged the rules into a standalone caveman-skill compatible with Claude Code, Cursor, Windsurf, Copilot, and over 40 other agents. The skill distills the approach into 10 rules, among them: no filler phrases, execute before explaining, no meta-commentary, no preamble, no postamble, no tool announcements, explain only when needed, let code speak for itself, and treat errors as things to fix rather than narrate.
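For anyone who wants to try the idea without installing anything, the rules named above can be turned into a system prompt. The sketch below is not the skill's actual file. The rule wording is paraphrased from this article, only the rules named here are included, and `CAVEMAN_RULES` and `build_system_prompt` are hypothetical names.

```python
# Rules paraphrased from the article's summary; not the skill's real text.
CAVEMAN_RULES = [
    "No filler phrases.",
    "Execute before explaining.",
    "No meta-commentary.",
    "No preamble.",
    "No postamble.",
    "No tool announcements.",
    "Explain only when needed.",
    "Let code speak for itself.",
    "Treat errors as things to fix, not narrate.",
]


def build_system_prompt(rules: list[str]) -> str:
    """Join the rules into a numbered block usable as a system prompt."""
    lines = ["Follow these response rules:"]
    lines += [f"{i}. {rule}" for i, rule in enumerate(rules, start=1)]
    return "\n".join(lines)


print(build_system_prompt(CAVEMAN_RULES))
```

The resulting string can be passed as the system instruction to whichever model or agent is in use. Keeping the rules in a plain list makes it easy to drop or reword one and measure the effect on output length.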

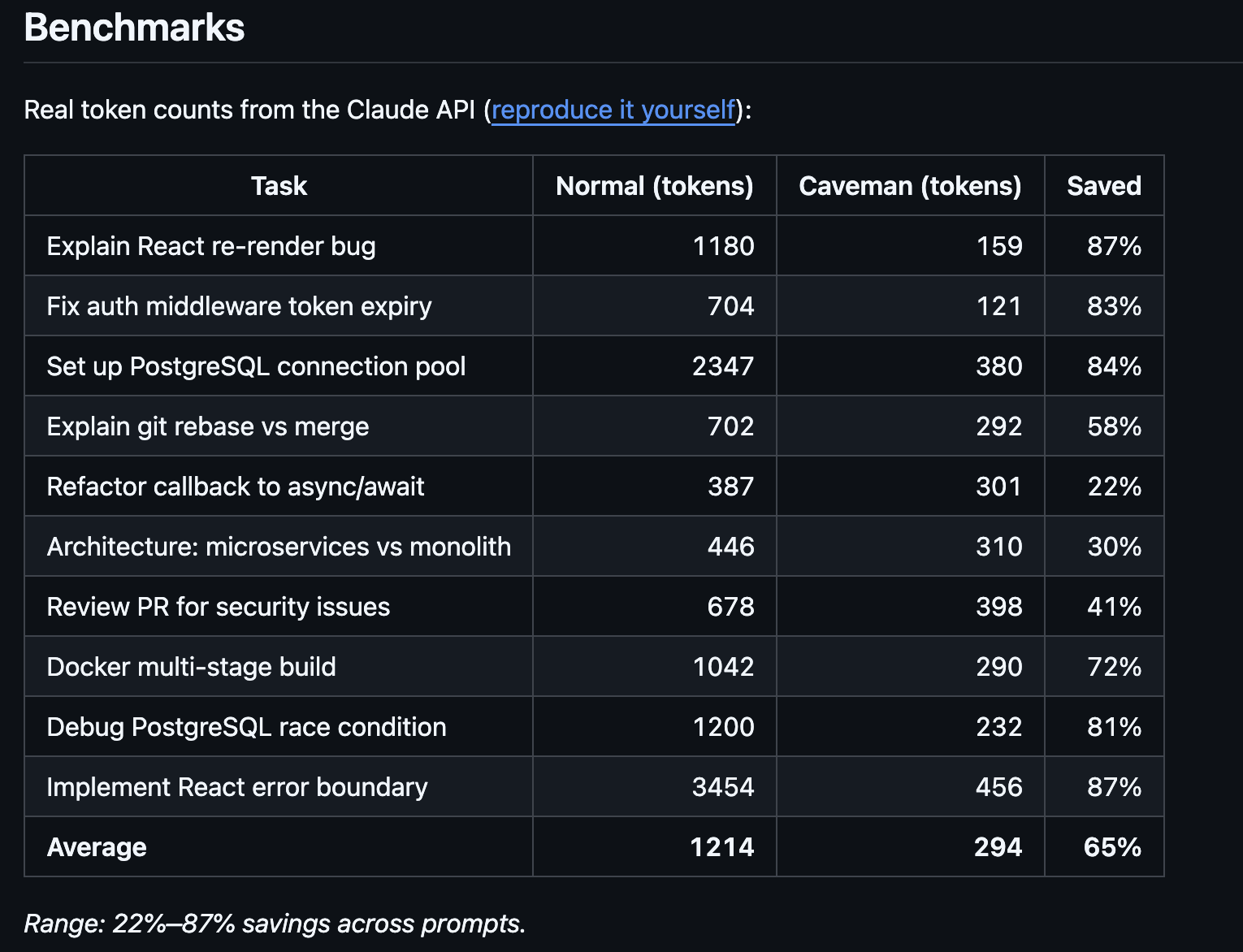

Benchmarks in the repo, verified with tiktoken, show output token reductions that include 68% on web search tasks, 50% on code edits, and 72% on question-and-answer exchanges, for an average output reduction of 61% across the four standard tasks tested.

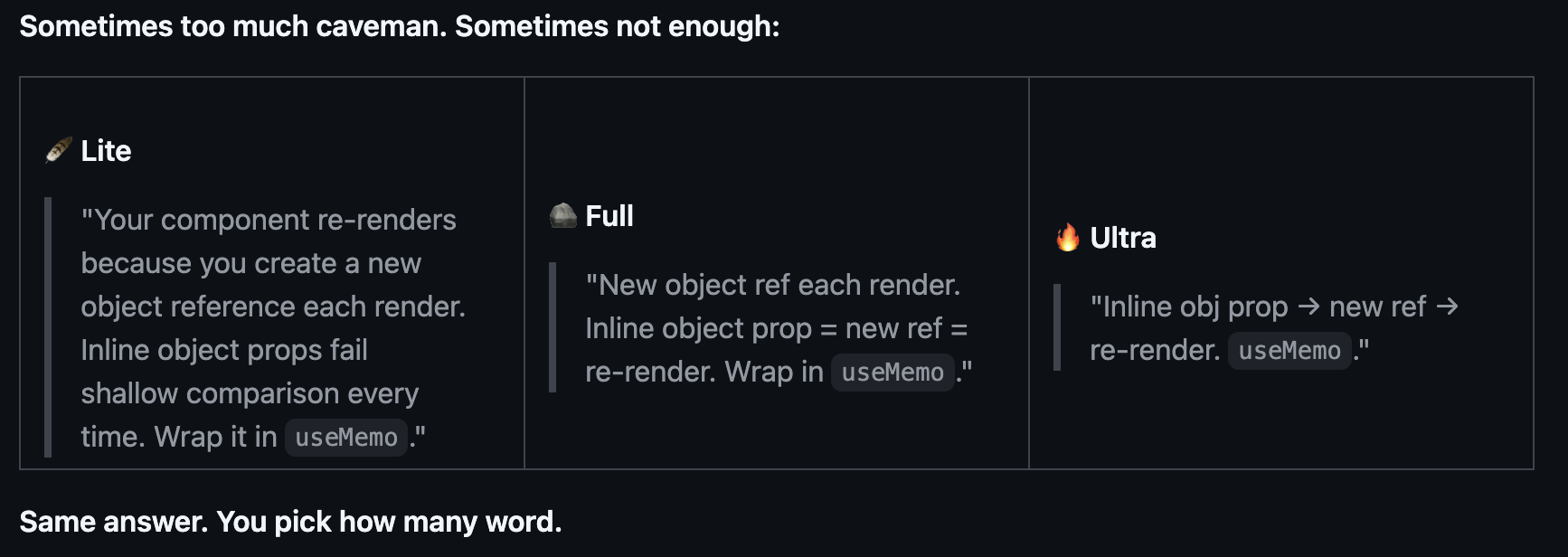

A parallel repo by developer Julius Brussee, which has 562 stars on GitHub, took a slightly different approach, framing the same idea as a SKILL.md file. The spec: respond like a smart caveman, cut articles, filler, and pleasantries, keep all technical substance. Code blocks remain unchanged. Error messages are quoted exactly. Technical terms stay intact. Caveman speak applies only to the English wrapper around the facts.

This one even comes with modes that control how much gets stripped: Normal, Lite, and Ultra. The model does the exact same work but gives a much shorter answer, which adds up to big savings over time.

The broader cost context gives the joke a sharper edge. Anthropic’s models are among the most expensive in terms of price per token. For developers running agentic workflows with dozens of turns per session, output verbosity is not a stylistic complaint. It is a line item. If a caveman grunt can replace a five-sentence summary of what the model just did, those saved tokens add up across thousands of API calls.

The caveman skill is installable in one command via skills.sh and works globally across projects. Whether or not it makes Claude marginally less articulate, it has already made a lot of developers significantly less annoyed.
