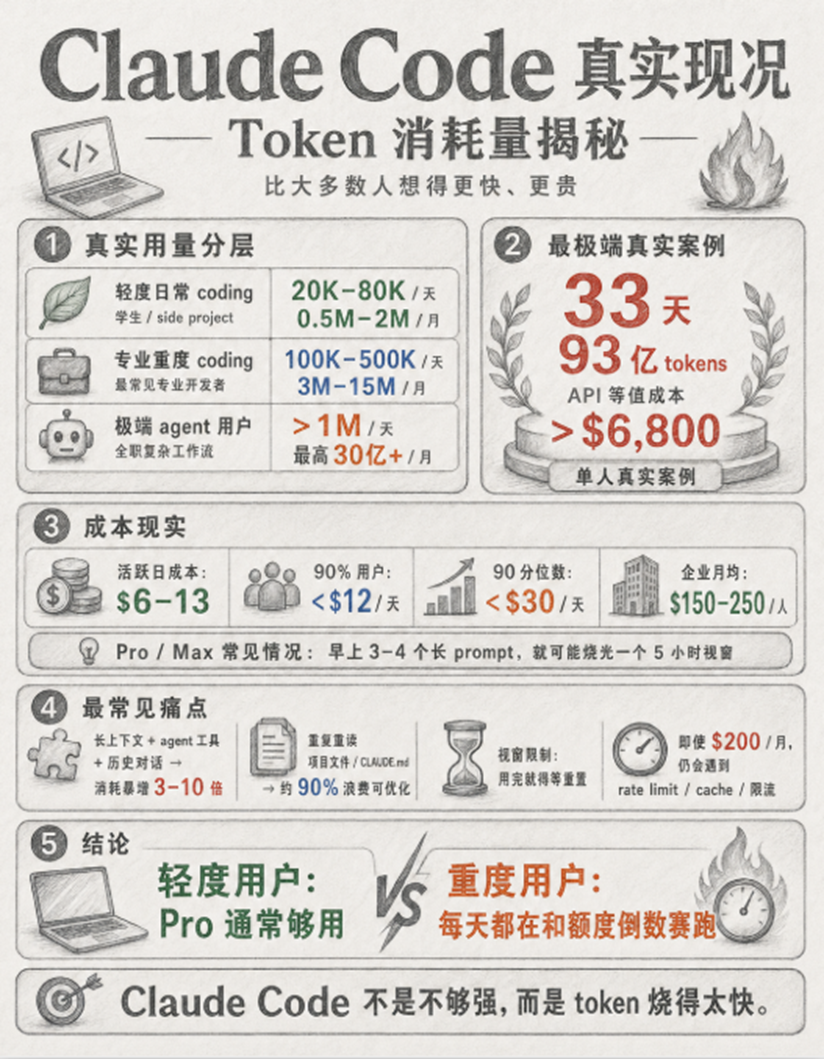

The Real Situation of Claude Code: Token Consumption Data Revealed

According to 2025–2026 posts on Reddit and X (Twitter), corporate deployment reports, and official data from Anthropic, most Claude Code users consume far more tokens than they expect:

- Light daily coding users: 20K–80K tokens per day, approximately 0.6M–2.4M tokens per month.

- Professional heavy coding users: 100K–500K tokens per day, 3M–15M tokens per month.

- Extreme agentic power users: Easily exceed 1M tokens per day, with monthly totals above 3 billion tokens in extreme cases (in one real case, an individual burned 9.3 billion tokens in 33 days).
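As a quick sanity check on how the daily figures scale to monthly ones, here is a minimal sketch. It assumes a 30-day month, which the article does not state; the helper names are illustrative only.

```python
DAYS_PER_MONTH = 30  # assumption: a 30-day month


def monthly_range(daily_low: int, daily_high: int, days: int = DAYS_PER_MONTH) -> tuple[int, int]:
    """Scale a daily token range to a monthly one."""
    return daily_low * days, daily_high * days


def fmt(tokens: int) -> str:
    """Format a token count in millions, e.g. 600000 -> '0.6M'."""
    return f"{tokens / 1_000_000:g}M"


for label, low, high in [
    ("light", 20_000, 80_000),
    ("heavy", 100_000, 500_000),
]:
    m_low, m_high = monthly_range(low, high)
    print(f"{label}: {fmt(m_low)}-{fmt(m_high)} tokens per month")
```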

Although Anthropic's Pro/Max subscriptions offer usage allowances that reset in 5-hour windows, in practice users often burn through an entire window with just a few long prompts in the morning. Many developers on X complain: "Paid $200 monthly fee, yet still have to watch the rate limit countdown." Trickier still are prompt caching efficiency and long context history: each time a tool is called, project files are read, or a previous session is resumed, a large number of input tokens are consumed again. Corporate reports indicate that 90% of token waste comes from duplicate content that could be optimized but has not been.

This is where WorldRouter has a significant advantage. It offers one-time-purchase AI token credits, routes across multiple top models (including the ultra-low-cost flash and coder series), and has a more flexible caching mechanism, which greatly reduces the credits consumed for the same coding tasks. The approach also applies to any mirror or relay site.

The Best Solution When Top Large Models Cannot Be Accessed Domestically

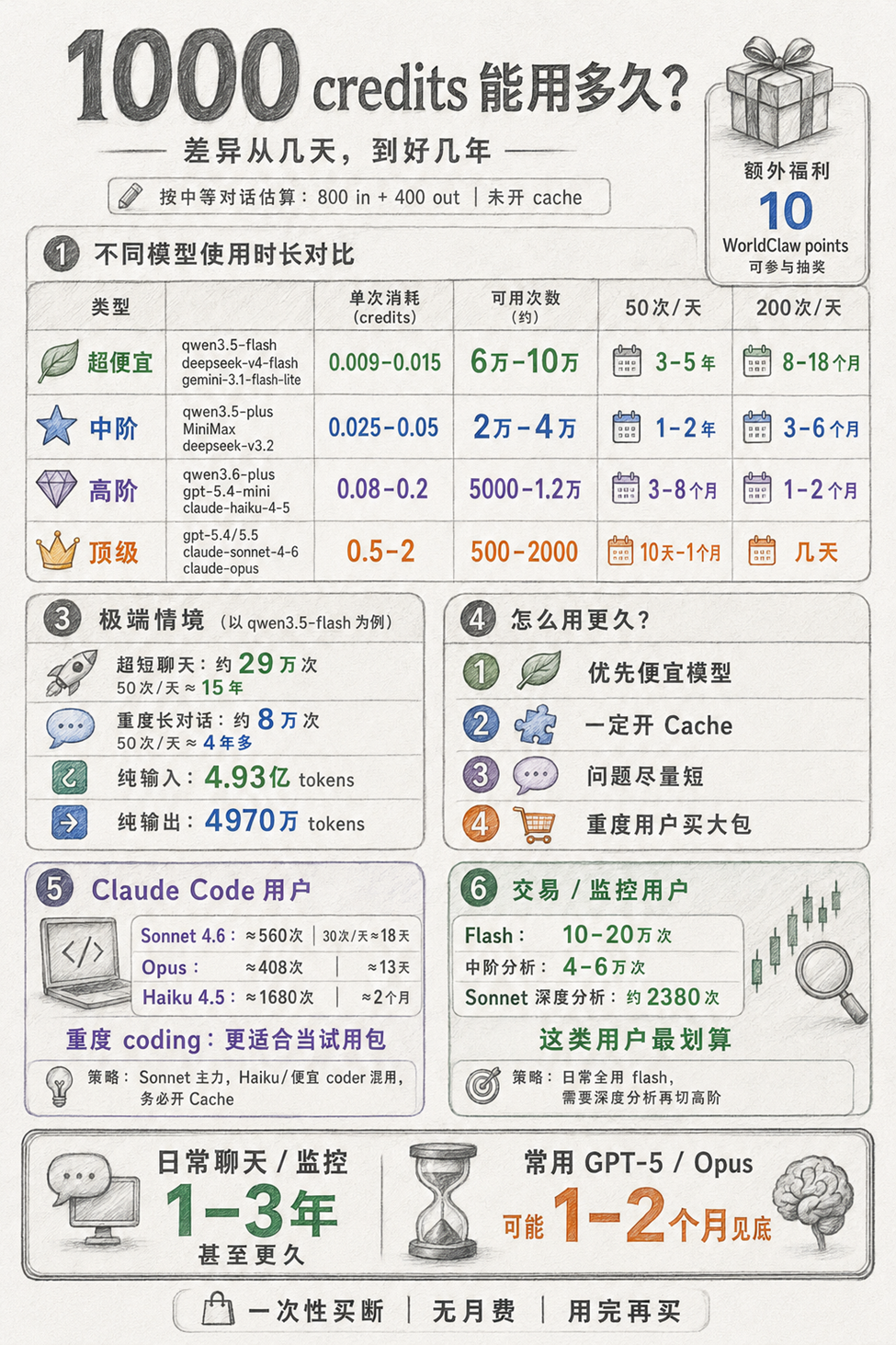

The greatest advantage of WorldRouter is that it stretches 1,000–1,000,000 credits as far as possible through smart routing among multiple models and flexible caching, tuned to each user's actual needs. Detailed recommended strategies for three common user types appear later in this article.

Due to well-known internet restrictions, most top overseas models such as Claude, GPT-5, and Gemini cannot be accessed directly and stably. Even through proxies, users often run into IP blocking, difficulty registering API keys, trouble topping up, slow speeds, and high costs. Many developers are therefore forced to use domestic models and give up the coding capabilities of Claude Opus/Sonnet.

Here, we first walk through a simple getting-started tutorial before discussing solutions and usage.

(The following tutorial also applies to other small proxy sites.)

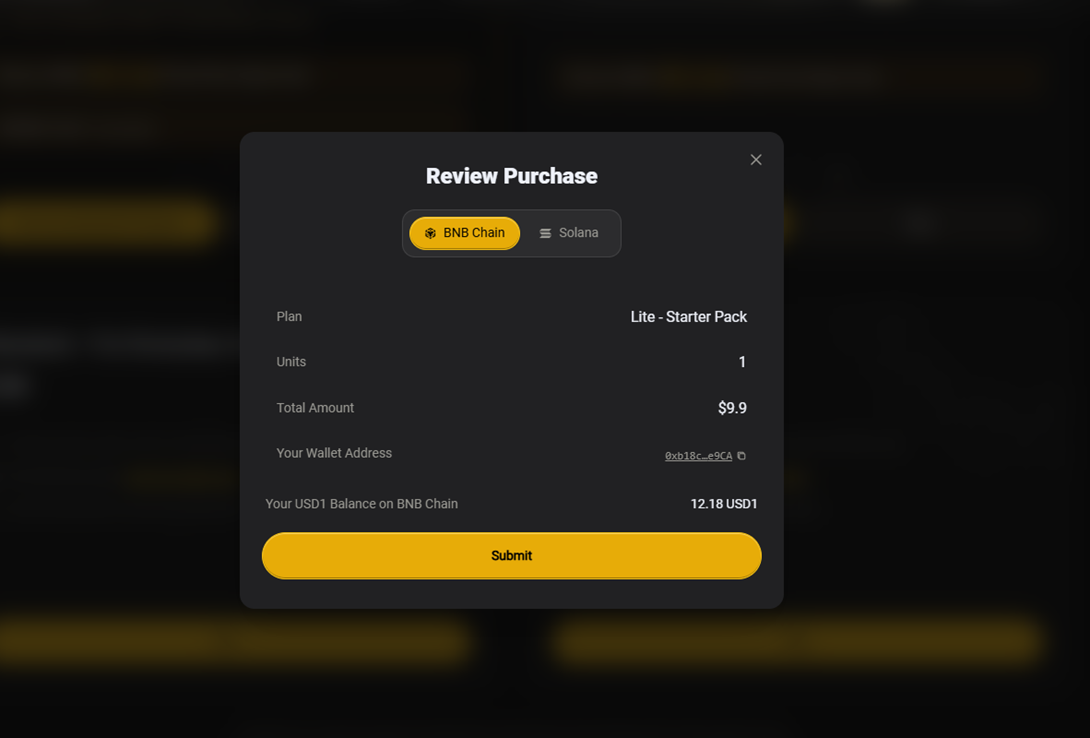

- 1. Register a wallet and top it up with USD 1.

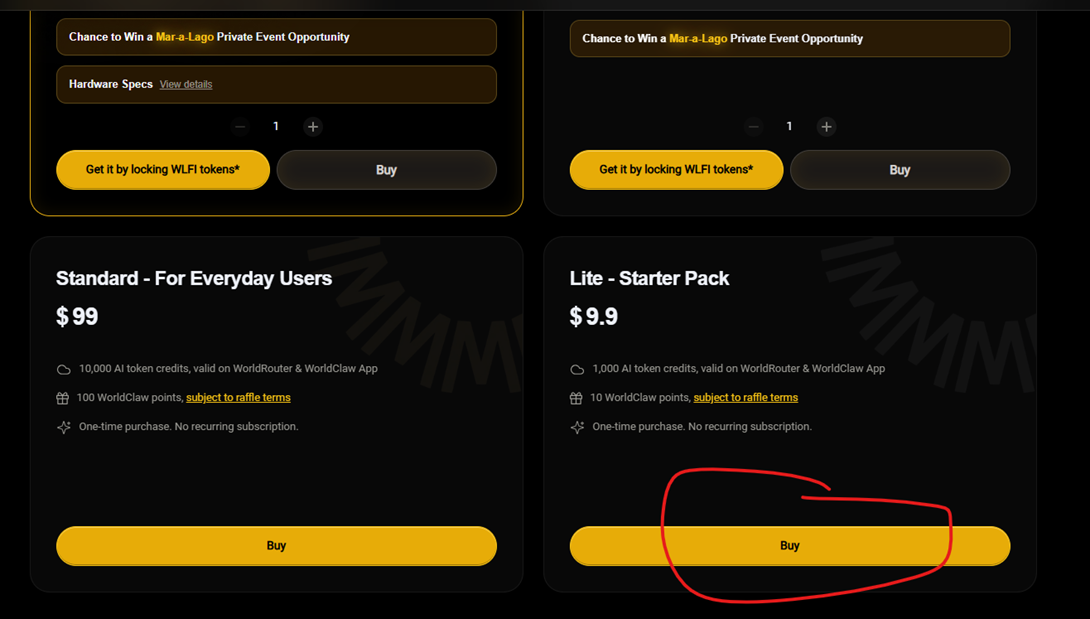

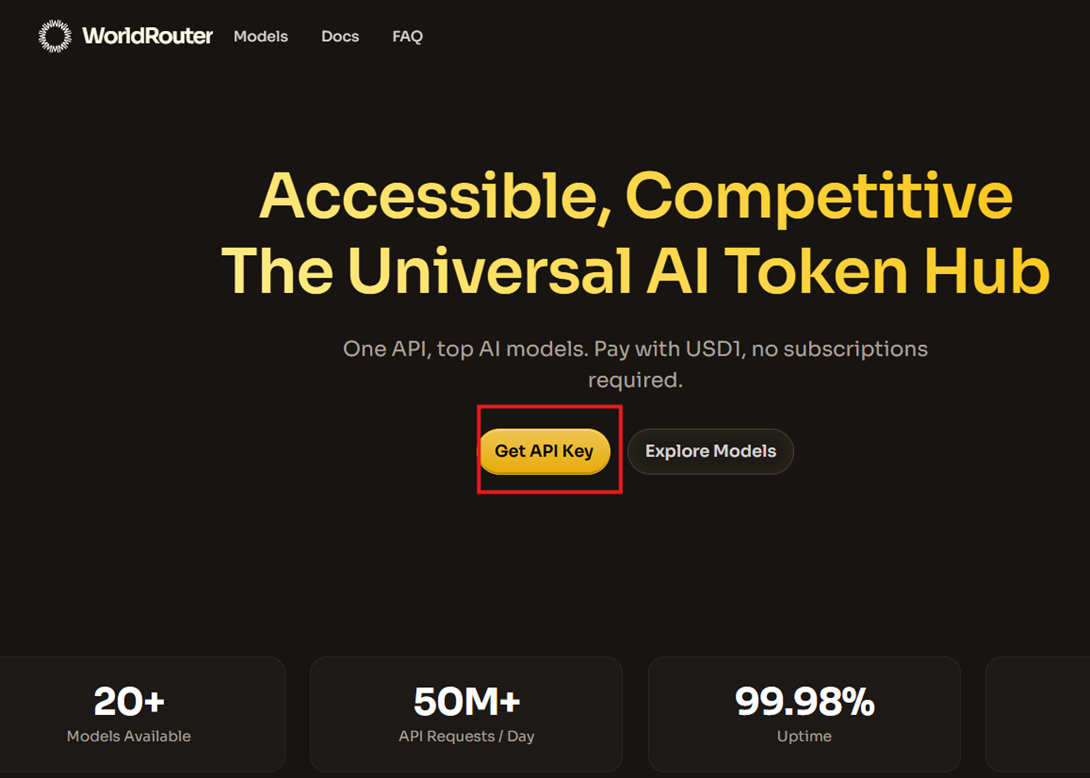

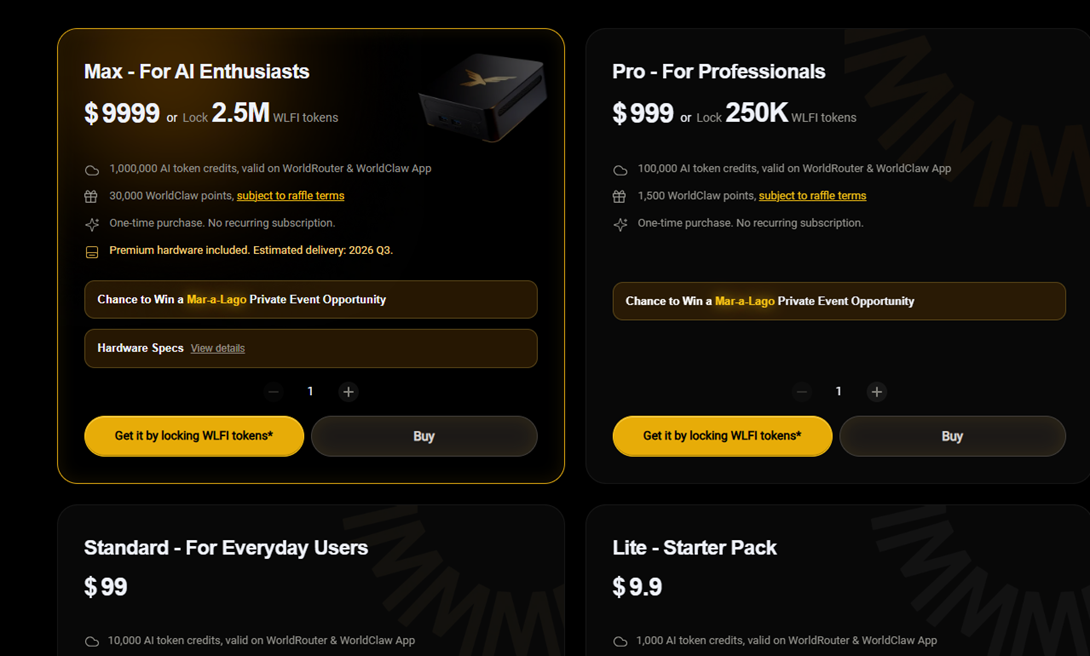

- 2. Go to https://worldclaw.ai/#world-router, scroll to the bottom, and select a plan to purchase (new users are advised to buy the cheapest one).

- 3. Set up the API key: scroll back up and you will see the following.

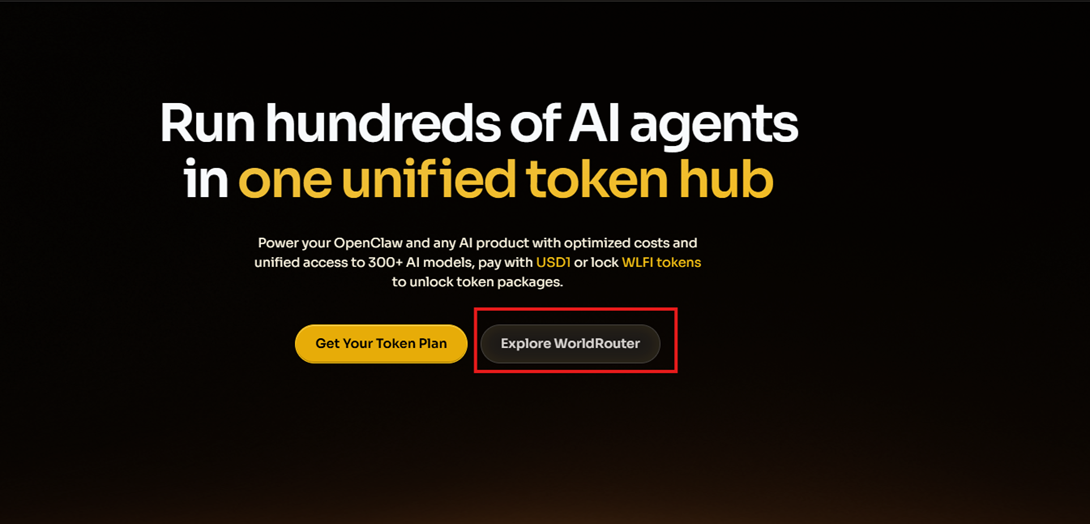

- Click Explore WorldRouter to open this page.

- Click on the left side

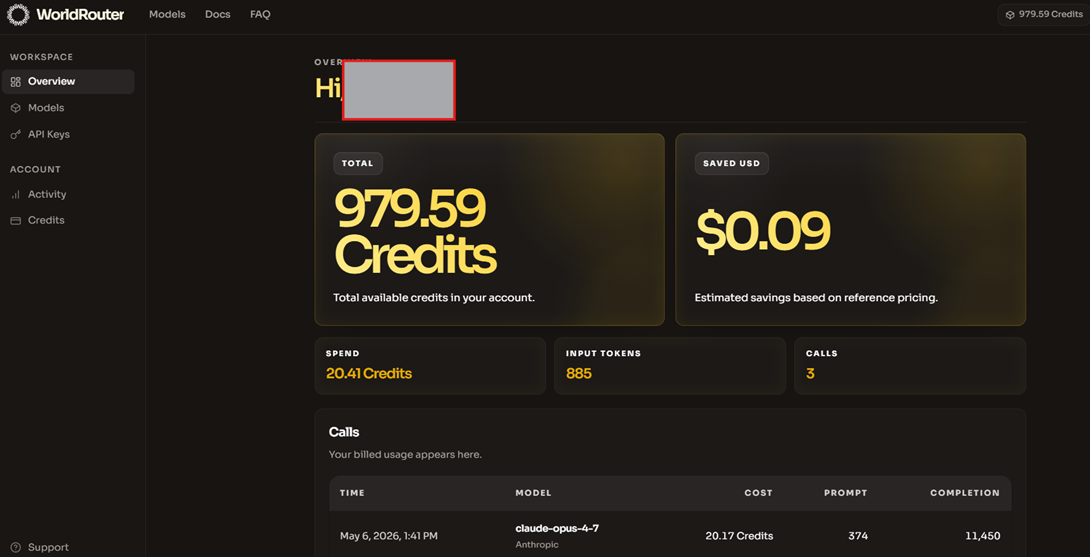

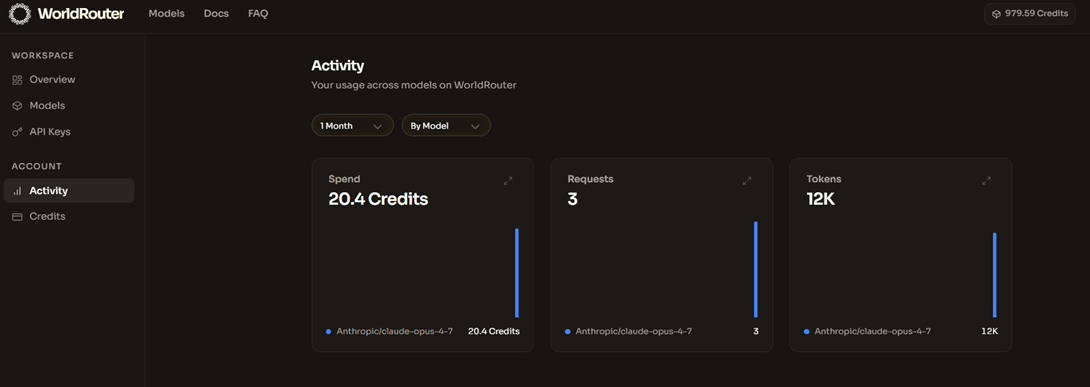

- Next, you will land on the control panel, which shows your remaining credits, money saved, and the models called. Since you have not started using it yet, you should see only 1,000 credits.

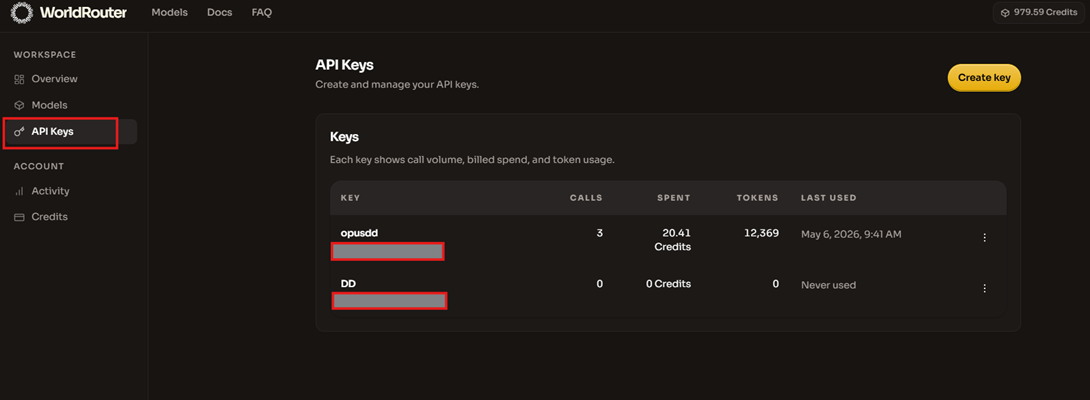

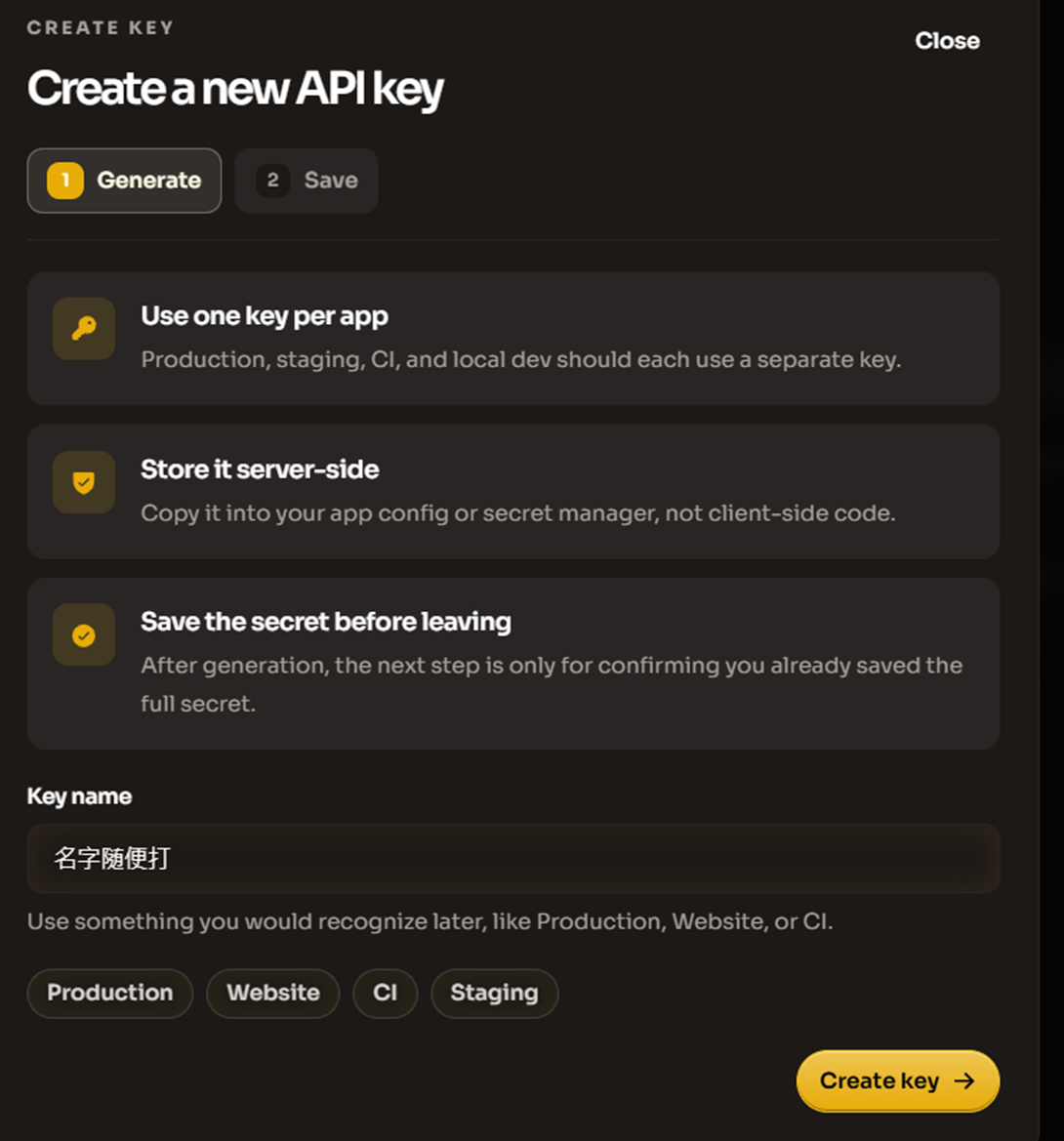

- Next, go to the API Keys section, where you can create API keys.

- Click the yellow button in the top right corner to create a key.

- Give it any name you like.

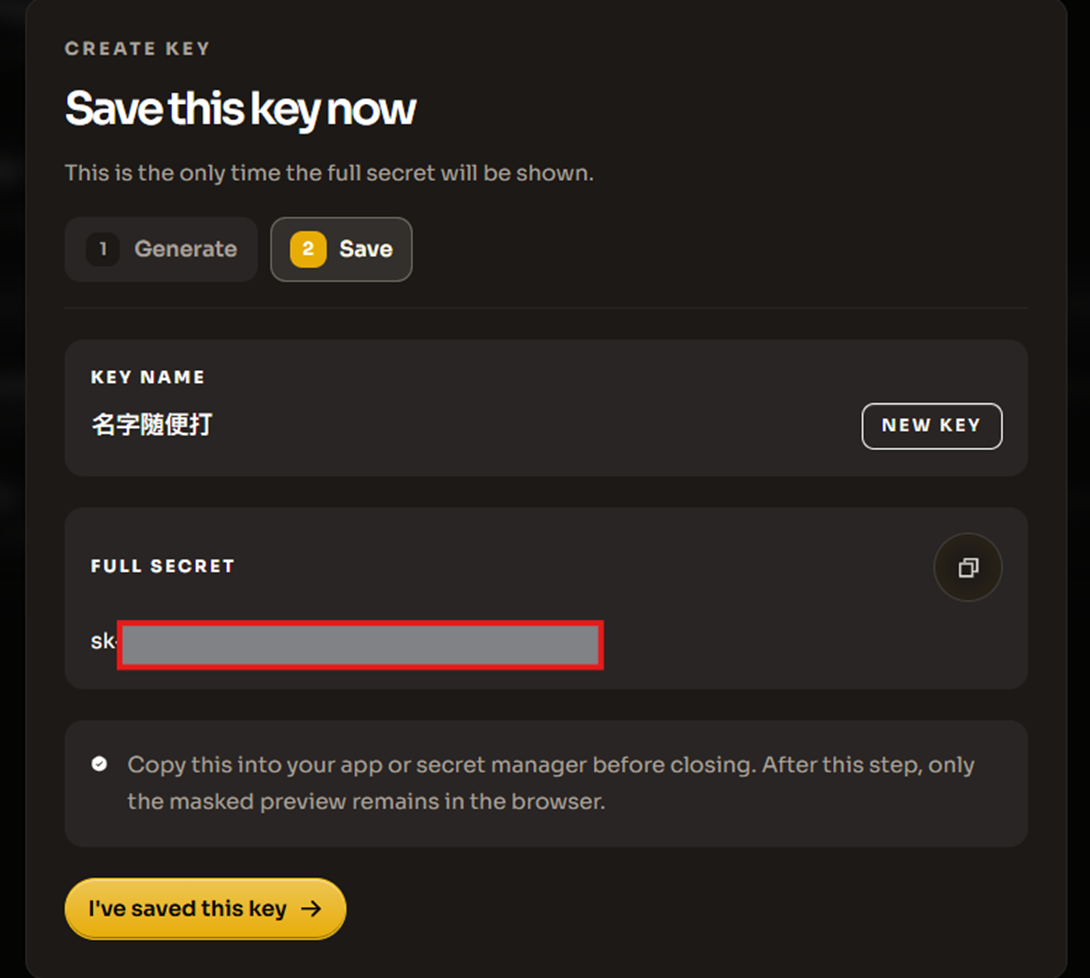

You will then receive a key beginning with sk, and you are ready to start using it.

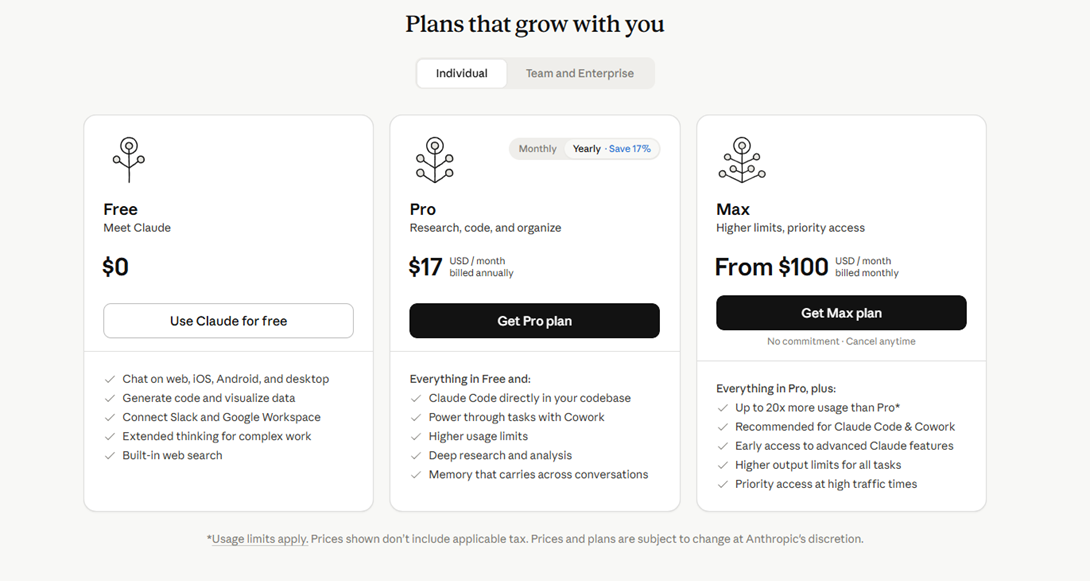

The most popular large model right now is Claude. Its latest model, Opus 4.7, theoretically costs $17 or $100 to try on the official site, but with WorldRouter you can use it freely for less than $10.

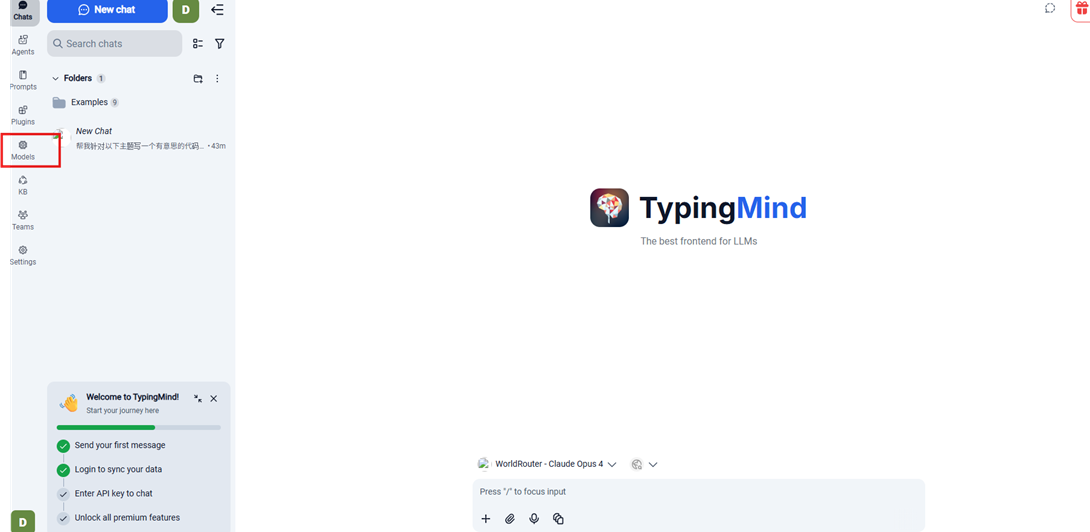

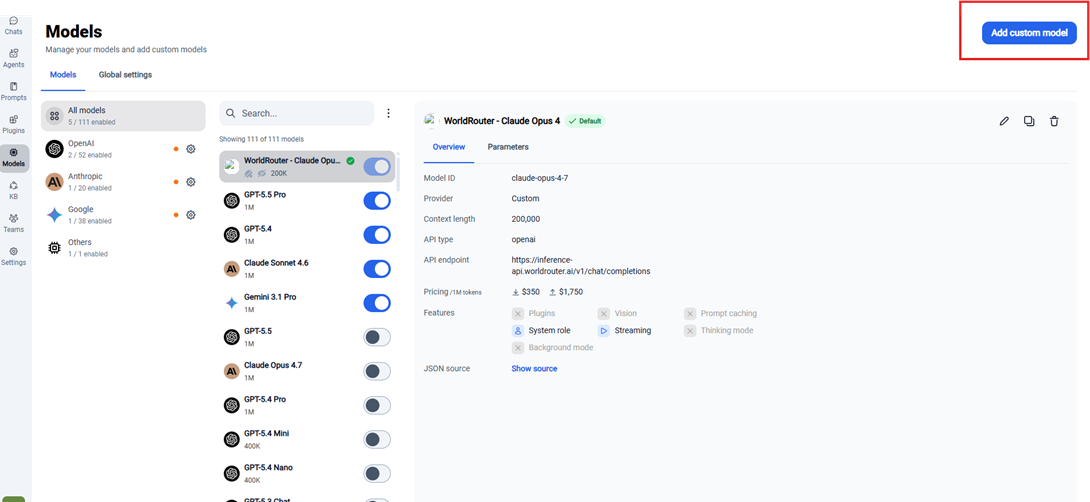

Next, I will show how to use it. First, go to the largest mirror site, https://www.typingmind.com/.

- Click Models in the sidebar.

- Then click on the top right corner.

Next, enter the parameters.

1. Basic Configuration

Name: WorldRouter Claude Opus 4.7

API Type: OpenAI Chat Completions API

Endpoint URL: https://inference-api.worldrouter.ai/v1/chat/completions

If your platform automatically appends /chat/completions, use the base URL instead: https://inference-api.worldrouter.ai/v1

Model ID: claude-opus-4-7

Context Length: 200000

Icon URL: can be left blank, or use https://upload.wikimedia.org/wikipedia/commons/thumb/8/8a/Anthropic_Logo.svg/512px-Anthropic_Logo.svg.png

Pricing

- Input tokens: 350

- Output tokens: 1750

This is just used to estimate costs and does not affect whether the model can be used.
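To see what these pricing fields imply for a single request, here is a minimal sketch of the estimate. The article does not state the unit of the two numbers, so the per-1M-token denominator below is an assumption, and `estimate_cost` is a hypothetical helper rather than anything the platform provides.

```python
INPUT_PRICE = 350         # "Input tokens" value from the Pricing fields
OUTPUT_PRICE = 1750       # "Output tokens" value from the Pricing fields
PRICE_UNIT = 1_000_000    # assumption: prices are quoted per 1M tokens


def estimate_cost(input_tokens: int, output_tokens: int,
                  input_price: float = INPUT_PRICE,
                  output_price: float = OUTPUT_PRICE,
                  unit: int = PRICE_UNIT) -> float:
    """Estimate the cost of one request from its token counts."""
    return (input_tokens * input_price + output_tokens * output_price) / unit


# Example: one request with 2,500 input tokens and 1,200 output tokens.
print(f"{estimate_cost(2_500, 1_200):.3f}")
```

If the platform quotes prices per 1K tokens instead, only `PRICE_UNIT` needs to change.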

2. Authentication Settings

Authentication Type: API Key via HTTP Header

Header Key: Authorization

Header Value: Bearer <your complete API key, starting with sk>

Note that there must be a space between Bearer and your sk key.

Correct format example: Bearer sk-proj-xxxxxxxxxxxxxxxxxxxxxxxx

(Do not add other text, do not omit Bearer)
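Outside a chat front end, the same endpoint, model ID, and header can be exercised from a script. The sketch below only builds the request with Python's standard library and does not send it. It assumes the endpoint accepts the standard OpenAI Chat Completions request body, as the "API Type" setting suggests; the key is a placeholder.

```python
import json
import urllib.request

ENDPOINT = "https://inference-api.worldrouter.ai/v1/chat/completions"
MODEL_ID = "claude-opus-4-7"


def build_request(api_key: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a chat completions request."""
    body = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        ENDPOINT,
        data=json.dumps(body).encode("utf-8"),
        headers={
            # "Bearer", one space, then the complete key
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_request("sk-REPLACE_ME", "Say hello")  # placeholder key
print(req.get_header("Authorization"))

# To actually send it (needs a valid key and network access), assuming an
# OpenAI-style response body:
# with urllib.request.urlopen(req) as resp:
#     print(json.load(resp)["choices"][0]["message"]["content"])
```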

3. If the Platform Uses an API Key Field

Some platforms have no Header Value field and only provide an API Key field. In that case, you usually just paste the plain key (your complete API key, starting with sk) without adding Bearer.

4. Testing Method

After filling everything out, click the Test button at the bottom right. If a green success prompt appears, or the model responds normally, it indicates successful setup. After success, click Add to start using it.

5. Common Errors

- Error 401: Usually means the key is wrong or the Header Value is missing Bearer. Check that the format is Bearer <your complete API key, starting with sk>.

- Endpoint error: Confirm that the Endpoint URL is https://inference-api.worldrouter.ai/v1/chat/completions. Only if your platform automatically appends /chat/completions should you use https://inference-api.worldrouter.ai/v1.

- Model not found: Please confirm that the Model ID is filled as claude-opus-4-7
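The first two errors above come down to string formatting, so they are easy to guard against in your own scripts. The following is a minimal sketch; `full_endpoint` and `auth_header_value` are hypothetical helper names, not part of any platform or library.

```python
CHAT_PATH = "/chat/completions"


def full_endpoint(url: str) -> str:
    """Return the full chat completions URL whether or not the path is present."""
    url = url.rstrip("/")
    return url if url.endswith(CHAT_PATH) else url + CHAT_PATH


def auth_header_value(key_or_header: str) -> str:
    """Return 'Bearer <key>' whether or not the input already has the prefix."""
    value = key_or_header.strip()
    if value.lower().startswith("bearer "):
        value = value[len("bearer "):].strip()
    return f"Bearer {value}"


print(full_endpoint("https://inference-api.worldrouter.ai/v1"))
print(auth_header_value("sk-REPLACE_ME"))  # placeholder key
```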

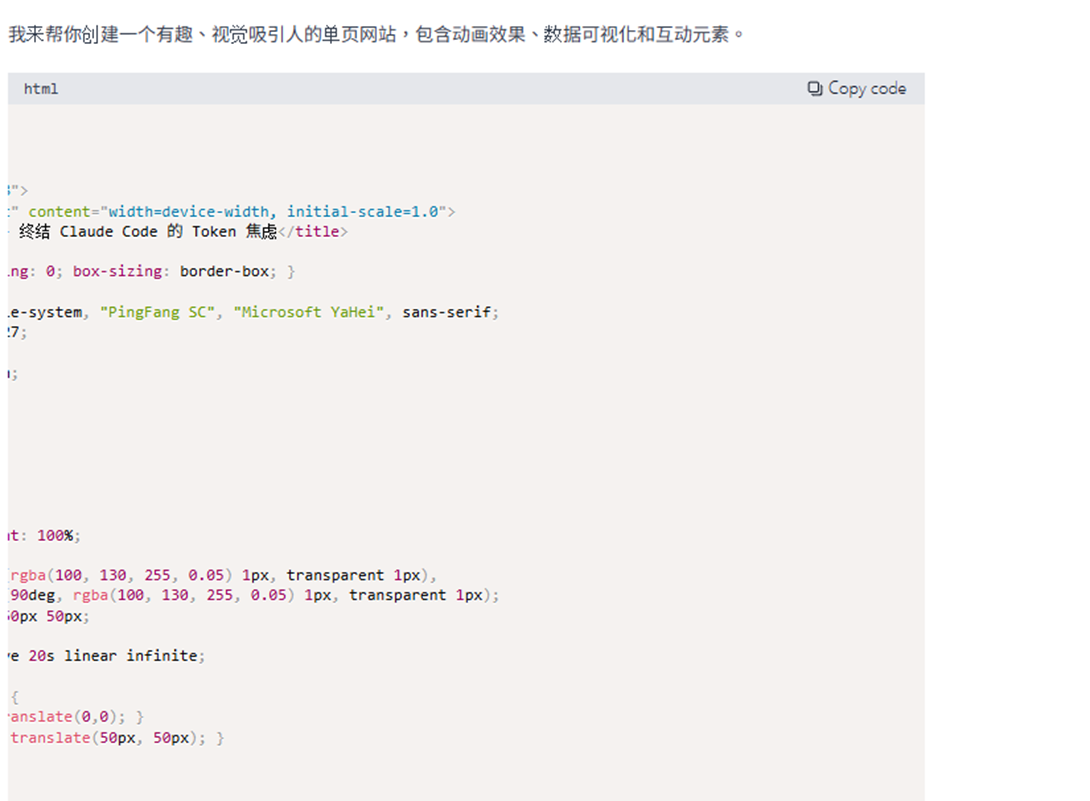

After that, everything should run smoothly.

After a run, you will see how many credits were consumed. Since writing a web page is relatively complex, the test consumed about 20.4 credits. That covers the basic usage. For those who want more than the basics, WorldRouter also works seamlessly with LiteLLM and other open-source projects.

Since WorldRouter is a proxy, it pays to make full use of what a proxy layer offers. Its main advantages are as follows.

Cost Control:

Top-tier models (like GPT-5) perform best but are costly. For basic tasks (such as summarization or classification), a lower-tier model (like GPT-4o mini) or even a local model is sufficient and can greatly reduce expenses.

Service Availability:

Cloud services are not always stable. OpenAI, for example, occasionally suffers API throttling, server errors, and regional or peak-hour delays, so having alternative models available is a safeguard.
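The safeguard idea can be sketched as a simple fallback chain: try models in order and move on when one fails. This is an illustration only, not WorldRouter's own routing logic. The `send` callable is a stand-in for whatever client you use, and `fake_send` simulates an outage so the example runs offline.

```python
from typing import Callable, Sequence


def call_with_fallback(models: Sequence[str], prompt: str,
                       send: Callable[[str, str], str]) -> tuple[str, str]:
    """Try each model in order; return (model, reply) from the first success."""
    errors = []
    for model in models:
        try:
            return model, send(model, prompt)
        except Exception as exc:  # broad on purpose: any failure triggers fallback
            errors.append(f"{model}: {exc}")
    raise RuntimeError("all models failed: " + "; ".join(errors))


def fake_send(model: str, prompt: str) -> str:
    """Hypothetical client that pretends the first model is throttled."""
    if model == "claude-sonnet-4-6":
        raise TimeoutError("simulated throttling")
    return f"reply to {prompt!r}"


model, reply = call_with_fallback(
    ["claude-sonnet-4-6", "deepseek-v4-flash"], "ping", fake_send
)
print(model, reply)
```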

Diversity of Model Needs:

Different models may exhibit significant differences in performance across different languages or tasks. For example, some models may perform better after fine-tuning for Chinese comprehension or generation tasks, while certain models may excel at multi-step logical reasoning tasks. This variability is why applications need to possess the ability to flexibly switch models.

Compliance and Privacy Considerations:

Some data (such as financial reports, personal data) is restricted by regulations or corporate policies and cannot be processed in the cloud, requiring reliance on local deployment solutions to ensure data security.

System Flexibility and Measurability:

During development, it is often necessary to compare the behavioral differences of different models or assess result quality through A/B testing. Quickly replacing or mixing models will greatly enhance the efficiency of system experimentation and optimization.

WorldRouter not only reduces the complexity of LLM integration but also lets cloud and local models work together in the same environment, making it an indispensable foundation for building multi-model AI applications. Next, we will show how to combine models in practice.

Strategies for Heavy Coding Users, Trading & Monitoring Users, and General Developers

1. Claude Code Heavy Coding Users (Coding Time Over 4 Hours Daily)

These users are typically full-time developers, AI agent engineers, or team tech leads who do a lot of long-context coding, project refactoring, tool calls, debugging, and architectural design every day. According to data shared online, such users consume 100K–500K tokens per day (extreme cases reach 1M+).

Recommended Model Routing Strategy:

Main model: claude-sonnet-4-6 (strongest reasoning, best coding performance).

Auxiliary models: qwen3-coder-plus (quick boilerplate, simple debugging) and deepseek-v4-flash (ultra-low-cost real-time testing).

Switching rules:

- Long file analysis, complex architecture, agent workflows → always use Claude Sonnet 4.6.

- Quick Q&A, single-file modifications, repeated boilerplate → automatically route to qwen3-coder-plus or deepseek-v4-flash (saving 80–90% of credits).

Cache settings: Keep caching fully enabled. During long coding sessions, cached reads of CLAUDE.md, project files, and conversation history can cut input costs to one-tenth of the original.
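If you do the switching in your own client code rather than relying on automatic routing, the rules above reduce to a small lookup. This is a minimal sketch: the task-type labels are hypothetical names invented for illustration, and treating deepseek-v4-flash as the cheapest option is an assumption based on its "ultra-low-cost" description.

```python
PRIMARY = "claude-sonnet-4-6"
CHEAP = ("qwen3-coder-plus", "deepseek-v4-flash")

# Hypothetical task labels mirroring the switching rules.
HEAVY_TASKS = {"long_file_analysis", "architecture", "agent_workflow"}
LIGHT_TASKS = {"quick_qa", "single_file_edit", "boilerplate"}


def pick_model(task_type: str, prefer_cheapest: bool = False) -> str:
    """Choose a model ID for a task according to the switching rules."""
    if task_type in HEAVY_TASKS:
        return PRIMARY
    if task_type in LIGHT_TASKS:
        # assumption: deepseek-v4-flash is the cheaper of the two
        return CHEAP[1] if prefer_cheapest else CHEAP[0]
    return PRIMARY  # unknown task types fall back to the main model


print(pick_model("architecture"))
print(pick_model("boilerplate"))
```

Defaulting unknown tasks to the main model trades some credits for safety; flip that default if cost matters more than quality.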

Plan Recommendations and Lifespan Estimation (average 2,500 input + 1,200 output tokens per coding round):

- Pro (100,000 credits):

  - With Claude Sonnet only, it lasts about 2–4 months (at 30–50 long coding sessions per day).

  - Mixing in flash/coder models with cache enabled can extend this to 6–10 months.

- Max (1,000,000 credits):

  - With Claude Sonnet only, it lasts about 1.5–2.5 years.

  - Mixing in flash/coder models with cache enabled, it can easily last 3–5 years (or even longer).
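To rerun this kind of estimate with your own numbers, the arithmetic is just plan credits divided by daily spend. The sketch below is illustrative: it borrows the roughly 20.4 credits measured in the earlier web-page test as a stand-in cost for one long session, which is an assumption (that test used Opus, and real sessions vary widely).

```python
def lifespan_days(plan_credits: float, sessions_per_day: float,
                  credits_per_session: float) -> float:
    """Days a plan lasts at a constant daily usage."""
    if sessions_per_day <= 0 or credits_per_session <= 0:
        raise ValueError("usage figures must be positive")
    return plan_credits / (sessions_per_day * credits_per_session)


CREDITS_PER_SESSION = 20.4  # assumption: borrowed from the earlier single test

for sessions in (30, 50):
    days = lifespan_days(100_000, sessions, CREDITS_PER_SESSION)
    print(f"Pro, {sessions} sessions/day: about {days / 30:.1f} months")
```

Replace `CREDITS_PER_SESSION` with the per-session figure from your own consumption report for a more realistic estimate.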

Practical Operation Tips: Create a dedicated "CLAUDE.md" and keep it concise (around 5,000 tokens). Before the end of each session, use /compact or /clear, or manually remove useless history. Set WorldRouter routing preferences to "coding tasks prioritize Sonnet, other tasks prioritize the lowest price."

Extremely heavy users are advised to lock in the Max plan directly, as the included Premium hardware and Mar-a-Lago raffle opportunity offer greater long-term value.

2. Trading & Monitoring Users (Market Watching, Strategy Analysis, Real-time Data Interpretation)

These users typically send high-frequency but short prompts. They may check a ticker, indicators, or risk assessments, or run simple backtests, as often as every minute. Daily query counts are high (50–200), but each conversation is extremely short (under 400 input + 200 output tokens), which makes this a "quick Q&A" workload.

Recommended Model Routing Strategy:

Main models: deepseek-v4-flash, qwen3.5-flash, gemini-3.1-flash-lite (fastest speed, lowest price)

Auxiliary models: claude-sonnet-4-6 (only used for complex strategy design, mathematical models, and risk backtesting)

Switching rules: Daily monitoring, real-time data interpretation, simple chart analysis all use the flash series; only switch to Claude for deep reasoning when needed.

Plan Recommendations and Lifespan Estimation (average 400 input + 200 output tokens per conversation):

- Standard (10,000 credits):

  - Flash models only: approximately 1.5–3 years of usage (100 queries per day is fully covered).

  - Occasionally mixing in Claude: can still last 10–18 months.

- Pro (100,000 credits):

  - Flash models only: easily lasts 15–30 years (essentially a one-time purchase until retirement).

  - Mixing in Claude at a 10% rate: can still last 4–8 years.

Practical Operation Tips: After enabling cache, repeatedly querying the same ticker or indicator consumes almost no credits. Write frequently used indicators as fixed prompt templates to let WorldRouter automatically cache them.
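A fixed prompt template simply means the long, unchanging instructions stay byte-for-byte identical across requests, with only a short variable part at the end. The sketch below assumes that caching works on an identical leading prefix, which is how prompt caching commonly behaves but is not confirmed for WorldRouter here; the template text and ticker names are made up for illustration.

```python
# Fixed part: identical in every request, so it is a candidate for caching.
SYSTEM_TEMPLATE = (
    "You are a market-monitoring assistant. "
    "Given a ticker and an indicator, reply with a one-line reading "
    "and a one-line risk note."
)


def build_messages(ticker: str, indicator: str) -> list[dict]:
    """Build chat messages with a constant prefix and a short variable tail."""
    return [
        {"role": "system", "content": SYSTEM_TEMPLATE},
        {"role": "user", "content": f"Ticker: {ticker}\nIndicator: {indicator}"},
    ]


first = build_messages("AAPL", "RSI")    # hypothetical query
second = build_messages("TSLA", "MACD")  # hypothetical query
print(first[0] == second[0])  # the system message is the same object content
```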

Set Routing Preferences:

- "Speed priority + lowest price," allowing the system to automatically select the cheapest flash model.

This type of user gets the best value: the Standard plan is almost equivalent to buying lifetime usage.

3. General Developers (Daily Coding + Learning + Occasional Projects)

These users code 1–3 hours a day, which includes learning new technologies, writing side projects, simple debugging, and reading documentation.

Token consumption is moderate, about 20K–80K per day.

Recommended Model Routing Strategy:

Main models: qwen3.5-plus / deepseek-v3.2 (best cost-performance ratio);

Auxiliary models: claude-sonnet-4-6 (used during significant architecture design or review), claude-haiku-4-5 (for ultra-quick small tasks);

Switching rules: 80% of tasks use mid-tier models, switching to Claude only at critical moments.

Plan Recommendations and Lifespan Estimation (average 1,200 input + 600 output tokens per conversation):

- Standard (10,000 credits):

  - Mid-tier model + cache enabled: lasts approximately 8–14 months.

  - Occasionally mixing in Claude: can still last 5–9 months.

- Pro (100,000 credits):

  - Mid-tier model + cache enabled: lasts approximately 6–10 years.

  - Mixing in Claude at a 20% rate: can still last 3–5 years.

Practical Operation Tips:

- Make good use of WorldRouter's "smart routing" feature and set it to "budget priority" mode.

- Regularly check the credit consumption reports and adjust your model usage ratios.

- The Lite plan is suitable for a 1–2 week trial; upgrade to Standard once your usage habits are clear.

That concludes this introduction. Whatever your user type, WorldRouter can significantly extend the lifespan of your credits through "model mixing + caching."

Heavy coding users are best served by Pro/Max, trading and monitoring users might never finish the Standard plan, and general developers will find the Standard plan sufficient for long-term use. Of course, if you really need heavy usage or want a chance to see an idol, Max is also a great choice.

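Disclaimer: This article represents only the author's personal views and does not represent the position or views of this platform. It is provided for information sharing only and does not constitute investment advice to anyone. Any dispute between users and the author is unrelated to this platform. If any article or image on this page involves infringement, please email the relevant proof of rights and proof of identity to support@aicoin.com, and the platform's staff will review it.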